10 Key Facts About the AI-Driven Memory Shortage: Samsung and SK hynix Warn of Extended Scarcity

Samsung and SK hynix warn AI-driven HBM shortages may last through 2027, with customers reserving years ahead, tightening DRAM markets, and fueling record profits.

The artificial intelligence revolution is placing unprecedented strain on the global memory chip market. Samsung and SK hynix, the world's top memory manufacturers, have issued stark warnings that severe shortages of high-bandwidth memory (HBM) — the specialized chips essential for AI servers — could persist well into 2027 and beyond. Demand for HBM is exploding as tech giants train massive language models and deploy AI inference at scale. Customers are already reserving supply years ahead, tightening the broader DRAM market and fueling record profits for suppliers. This article unpacks ten critical insights into the current memory landscape.

1. HBM Demand Is Growing Exponentially

High-bandwidth memory has become the backbone of AI accelerators such as NVIDIA's H100 and B200 GPUs. Samsung and SK hynix report that HBM demand is rising at a compound annual growth rate (CAGR) exceeding 60% through 2027. Each new generation of AI models requires substantially more memory bandwidth and capacity. For instance, a single NVIDIA H100 GPU carries five active HBM3 stacks, and next-generation platforms will require even more. This appetite is outstripping supply even as both companies ramp up production.

2. Advanced Packaging Capacity Is a Major Bottleneck

HBM manufacturing relies on through-silicon vias (TSVs) and advanced stacking techniques. Samsung and SK hynix have invested billions in 3D packaging, but capacity remains tight. Stacking up to 12 DRAM dies vertically and bonding them to a logic base die is complex, and yields are still maturing. Industry analysts estimate that packaging constraints limit HBM output growth to 20-30% annually, far below the pace of demand. This bottleneck is a key reason shortages are expected to persist into 2027.
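
To see how quickly that gap compounds, the following minimal Python sketch compares the 60% demand CAGR cited in section 1 with a supply growth rate of 25%, the midpoint of the 20-30% range above. It assumes, purely for illustration, that supply and demand start out balanced; the function name and default values are hypothetical, not figures published by either company.

```python
def supply_demand_ratio(years, demand_cagr=0.60, supply_growth=0.25):
    """Return supply as a fraction of demand after `years`.

    Assumes supply and demand are equal at year 0 (an illustrative
    simplification) and that both grow at constant annual rates.
    """
    demand = (1 + demand_cagr) ** years
    supply = (1 + supply_growth) ** years
    return supply / demand


for year in range(1, 4):
    ratio = supply_demand_ratio(year)
    print(f"Year {year}: supply covers {ratio:.0%} of demand")
```

Under these assumptions, supply would cover roughly 78% of demand after one year and less than half after three, which is why a few years of constrained packaging growth can translate into a prolonged shortage.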

3. Customers Are Locking in Multi-Year Contracts

To secure supply, major cloud providers and AI companies have begun signing multi-year reservation agreements with Samsung and SK hynix. These contracts lock in allocation for 2025, 2026, and even 2027. Some customers have prepaid hundreds of millions of dollars. This forward booking is unprecedented in the memory industry, historically characterized by spot markets and quarterly negotiations. It signals that the AI sector views HBM as a strategic resource with limited alternatives in the near term.

4. Record Profits for Memory Makers

Thanks to HBM's premium pricing, roughly three to five times that of standard DRAM, both Samsung and SK hynix have seen operating margins soar. SK hynix swung from losses in 2023 to record profits in 2024, and Samsung's semiconductor division posted its highest profits in years. Analyst forecasts suggest combined net income from HBM alone could exceed $30 billion by 2026. The companies are using these windfalls to fund further expansion, but even aggressive capacity additions take 18-24 months to come online.

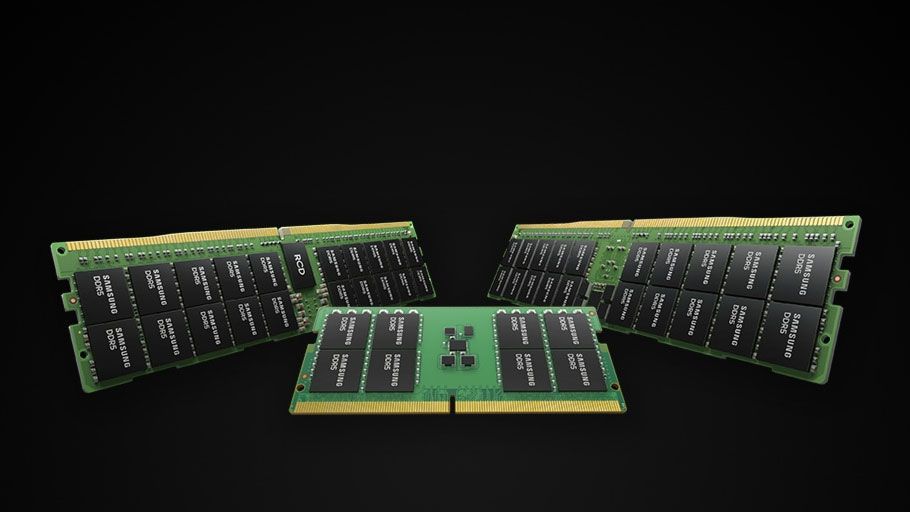

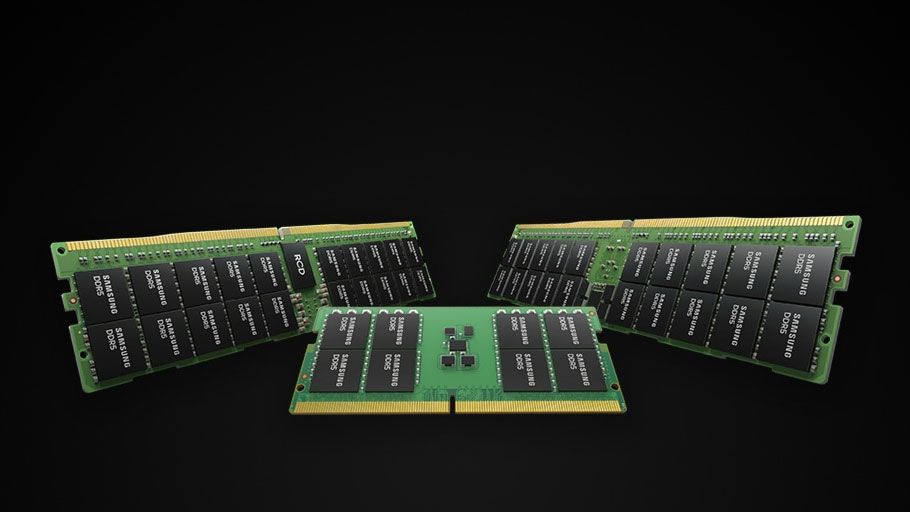

5. Broader DRAM Market Feels the Squeeze

As HBM production consumes more DRAM wafers (each HBM stack uses eight to twelve DRAM dies built on 1z-nm or 1a-nm class processes), less capacity remains for conventional DDR5 and LPDDR parts. Samsung has reallocated up to 30% of its DRAM fab output to HBM, driving up prices across the entire DRAM segment. PC and smartphone makers report longer lead times and higher costs. The knock-on effect is a tightening of the memory market well beyond AI workloads, affecting consumer electronics and enterprise servers alike.
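
The effect on conventional DRAM can be approximated with simple arithmetic. The sketch below assumes total wafer output stays flat and that HBM previously took a hypothetical 10% of it (a baseline this article does not state); only the 30% figure comes from the text above.

```python
def conventional_supply_change(old_hbm_share, new_hbm_share):
    """Fractional change in wafers left for DDR5/LPDDR when HBM's share shifts.

    Assumes total DRAM wafer output is unchanged (an illustrative
    simplification, not a reported figure).
    """
    old_conventional = 1.0 - old_hbm_share
    new_conventional = 1.0 - new_hbm_share
    return (new_conventional - old_conventional) / old_conventional


# Hypothetical 10% baseline; 30% is the reallocation figure cited above.
change = conventional_supply_change(old_hbm_share=0.10, new_hbm_share=0.30)
print(f"Conventional DRAM wafer supply changes by {change:.1%}")
```

With these inputs, wafers available for conventional DRAM fall by about 22%, a larger drop than the 20-point shift in share suggests because it is measured against the smaller conventional base.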

6. Investment in New Fabs Won't Solve It Quickly

Both Samsung and SK hynix have announced massive greenfield fab projects — Samsung's Pyeongtaek expansion and SK hynix's M15X in Cheongju. However, these facilities require 24-30 months to come online. Moreover, HBM is not just about fab capacity; it also demands advanced bumping, test, and assembly infrastructure. Even after fabs are built, achieving high HBM yield can take another year. This timeline means supply relief is unlikely before 2026 at the earliest, keeping the market tight through at least 2027.
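
The timeline above can be worked through as month arithmetic. In this sketch the construction start date is a hypothetical placeholder, not a date announced by either company; the 24-30 month build range and the one-year yield ramp are the figures quoted in the paragraph.

```python
def add_months(year, month, delta):
    """Return the (year, month) that falls `delta` months after the given month."""
    index = year * 12 + (month - 1) + delta
    return index // 12, index % 12 + 1


# Hypothetical construction start; not a date from either company.
start_year, start_month = 2024, 1

BUILD_MONTHS = (24, 30)   # fab construction range cited above
YIELD_RAMP_MONTHS = 12    # "another year" to reach high HBM yield

earliest = add_months(start_year, start_month, BUILD_MONTHS[0] + YIELD_RAMP_MONTHS)
latest = add_months(start_year, start_month, BUILD_MONTHS[1] + YIELD_RAMP_MONTHS)
print(f"High-yield volume output: {earliest[0]}-{earliest[1]:02d} to {latest[0]}-{latest[1]:02d}")
```

For a project that broke ground in the assumed month, high-yield volume output would land somewhere in 2027, which is consistent with the expectation that the market stays tight through that year.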

7. HBM3E and HBM4 Push the Technology Frontier

To meet AI's bandwidth needs, SK hynix is ramping HBM3E (8 Gbps per pin) while Samsung is developing HBM4 with 16-high stacks and speeds exceeding 10 Gbps. Each successive generation demands finer die-to-die interconnects and more sophisticated thermal management. The technological leap increases R&D costs and time-to-market. While these innovations will eventually boost capacity and bandwidth per stack, they also add complexity that could prolong the imbalance between supply and demand.
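
Per-pin speeds translate into per-stack bandwidth by multiplying by the interface width and converting bits to bytes. The sketch below uses the pin rates quoted above; the interface widths (1024 bits for HBM3E, 2048 bits for HBM4) are assumptions taken from the JEDEC standards rather than from this article, and the function name is illustrative.

```python
def stack_bandwidth_gb_s(pin_rate_gbps, interface_width_bits):
    """Peak bandwidth of one HBM stack in GB/s.

    Per-pin data rate (Gbit/s) times the number of data pins,
    divided by 8 to convert bits to bytes.
    """
    return pin_rate_gbps * interface_width_bits / 8


# Interface widths are assumptions from the JEDEC standards, not from this article.
hbm3e = stack_bandwidth_gb_s(pin_rate_gbps=8, interface_width_bits=1024)
hbm4 = stack_bandwidth_gb_s(pin_rate_gbps=10, interface_width_bits=2048)
print(f"HBM3E: {hbm3e:.0f} GB/s per stack")
print(f"HBM4:  {hbm4:.0f} GB/s per stack")
```

On these assumptions a single HBM3E stack peaks at about 1 TB/s, while an HBM4 stack at 10 Gbps would reach roughly 2.5 TB/s, most of the gain coming from the wider interface rather than the faster pins.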

8. Geopolitical Factors Add Uncertainty

Memory supply chains are highly concentrated in South Korea, with Samsung and SK hynix controlling over 90% of HBM production. Trade tensions between the US and China have led to export controls on advanced chips and equipment. South Korean firms must navigate complex rules when selling to Chinese AI customers. Meanwhile, the US CHIPS Act aims to build domestic HBM capacity, but it will take years to materialize. Geopolitical disruptions could worsen shortages or create regional mismatches.

9. Supply Diversification Remains Limited

Micron is the only other serious HBM contender, but its capacity is far smaller. Intel and Taiwan's ASE Technology are exploring packaging services, but they lack DRAM fabs. New entrants like China's CXMT are years behind. For the foreseeable future, Samsung and SK hynix will remain the primary sources. This oligopoly gives them pricing power but also makes the market vulnerable to outages: a single fab incident could cut available supply by a double-digit percentage overnight.

10. The Memory Cycle May Be Permanently Altered

Historically, memory chips followed boom-bust cycles driven by PC and smartphone demand. AI is changing that. HBM's captive demand from hyperscalers provides a stable, growing base. Inventory levels are unlikely to spike into oversupply as in previous cycles because customers are contractually bound to take delivery. If AI adoption continues, the industry could enter a period of persistent scarcity and higher margins, fundamentally reshaping the business model of memory manufacturers.

Conclusion: The warnings from Samsung and SK hynix are not merely tactical; they reflect a structural shift in the memory market. AI's hunger for bandwidth has turned HBM into one of the most constrained semiconductor components of the decade. While investments are pouring in, the combination of packaging bottlenecks, long fab timelines, and multi-year customer commitments makes it likely that shortages will persist through 2027 and possibly beyond. For businesses relying on AI infrastructure, securing long-term memory supply is now just as critical as acquiring GPUs.